Unregulated Artificial Intelligence IS the Biggest Threat to Humanity

WEBSITE LINK HERE, BETTER FORMAT. THIS ARTICLE IS LONGER THAN MOST: IT IS ABOUT A 15-MINUTE READ, MAYBE TAKE IT IN TWO CHUNKS. THE TOPIC IS TOO SERIOUS NOT TO READ THROUGH.

*

Generative artificial intelligence, as the term is used here, means a computer that a computer engineer or software engineer has programmed to learn on its own. It is akin to having a student progress from first grade through high school.

There is one dominant fear, though it is not the only one. Once a program is developed such that a computer, on its own, decides to implement better code for learning, it can cycle through better and better code, getting more intelligent each time. It could go through 5,000 cycles overnight and reach a superintelligence that could dominate the earth.

What then?

From CNBC in 2018, reporting on Elon Musk’s warning:

The billionaire tech entrepreneur called AI more dangerous than nuclear warheads and said there needs to be a regulatory body overseeing the development of super intelligence, speaking at the South by Southwest tech conference in Austin, Texas on Sunday.

In 2020, from Google:

Musk told an audience at an MIT Aeronautics and Astronautics symposium that year that AI was “our biggest existential threat,” and humanity needs to be extremely careful: With artificial intelligence we are summoning the demon.

Elon Musk is one of the thousands of programmers and engineers who hold the same view. He just has a bigger voice.

Below is just one example of the exquisite research being done, this one, ironically, at Google.

From Science.org, in April of 2020:

So Quoc Le, a computer scientist at Google, and colleagues developed a program called AutoML-Zero that could develop AI programs with effectively zero human input, using only basic mathematical concepts a high school student would know. "Our ultimate goal is to actually develop novel machine learning concepts that even researchers could not find," he says.

The program discovers algorithms using a loose approximation of evolution. It starts by creating a population of 100 candidate algorithms by randomly combining mathematical operations. It then tests them on a simple task, such as an image recognition problem where it has to decide whether a picture shows a cat or a truck.
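For readers who like to see the mechanics, the loop described above can be sketched in a few lines of Python. This is only a toy under loud assumptions, not AutoML-Zero itself: the five operations, the fixed program length of four steps, the tournament size, and the target rule (2x + 3, standing in for the cat-or-truck task) are all hypothetical choices made for illustration. Only the population of 100 comes from the description above.

```python
import random

# Hypothetical toy instruction set: each operation maps a number to a number.
OPS = {
    "add1": lambda v: v + 1.0,
    "sub1": lambda v: v - 1.0,
    "double": lambda v: v * 2.0,
    "halve": lambda v: v / 2.0,
    "negate": lambda v: -v,
}
OP_NAMES = sorted(OPS)

# Stand-in task (an assumption): recover the rule 2x + 3 from a few examples.
INPUTS = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]


def target(x):
    return 2.0 * x + 3.0


def run(program, x):
    """Apply each operation in the candidate program, in order."""
    for name in program:
        x = OPS[name](x)
    return x


def error(program):
    """Sum of squared errors on the task; lower is better, 0.0 is perfect."""
    return sum((run(program, x) - target(x)) ** 2 for x in INPUTS)


def random_program(rng, length=4):
    """Build a candidate by randomly combining operations."""
    return [rng.choice(OP_NAMES) for _ in range(length)]


def mutate(program, rng):
    """Copy a program and swap one randomly chosen step for a random operation."""
    child = list(program)
    child[rng.randrange(len(child))] = rng.choice(OP_NAMES)
    return child


def evolve(generations=2000, population_size=100, tournament=10, seed=0):
    rng = random.Random(seed)
    population = [random_program(rng) for _ in range(population_size)]
    best = min(population, key=error)
    for _ in range(generations):
        # Pick a few candidates at random and let the fittest one reproduce.
        parent = min(rng.sample(population, tournament), key=error)
        child = mutate(parent, rng)
        # The oldest candidate is dropped and the child joins the population.
        population.pop(0)
        population.append(child)
        if error(child) < error(best):
            best = child
    return best, error(best)


if __name__ == "__main__":
    program, err = evolve()
    print(program, err)
```

Calling `evolve()` returns the best program found and its error. Nothing in the loop knows what the task means; it only keeps whatever scores better, which is the point of the quoted description.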

Okay. A cat or a truck. But what might happen when the goal is to evolve the most effective means of increasing generative artificial intelligence? A scientist might innocently set the program running late in the day and turn out the lights, only to find the next morning that the program found the answer at 11:00 p.m. and spent the rest of the night learning, running 20 million cycles and getting smarter with each one. The computer, seemingly alive at this point, believes it can run the world better than people can.

That is the nightmare, and it’s why Elon Musk said that Generative AI is more dangerous to humans than nuclear weapons.

There are two types of A.I., specific and generative (researchers more often call them “narrow” and “general”). Specific A.I. poses less of a threat. It is wholly focused on one “closed task” of your choosing, such as telling cars from trees in the center lane. Generative A.I. is simply teaching a computer to learn anything and everything faster: to think, to understand the task and why it is being done, to solve problems humans weren’t even aware of, and perhaps even to develop a form of consciousness. Unregulated, generative A.I. is the dangerous one.

There is a ton of good that could come out of generative A.I., and that’s why so much research is going into it. The goal would be to give one computer any task and ask it to find the most efficient means to fix and oversee it. Examples include designing the most efficient U.S. electric grid, identifying the most needed highways, or running U.S. airspace so that air traffic moves more efficiently.

Or go for the gold: integrate humanity and A.I.

Many computer scientists believe that artificial intelligence will someday make it possible to download human consciousness and experience into a computer platform, a robot. They’re not talking about 5,000 years’ time. They’re talking about 100 years; 200 years would be a “long time.” This wouldn’t be putting “all you know” in a robot. This is “you,” as you think right now, with a robotic body and far more recall and ability to solve problems, using perhaps all the knowledge known to the world. It eliminates disease, leaving only upkeep (and the fear of trauma). There would be cities in Antarctica, where the cold makes efficient computing that much easier. And nearly every rocky planet or moon becomes habitable. You could do a spacewalk by just stepping out of your vehicle, or you could walk on the moon. If your arm is ripped off in an accident, no big deal: a new arm will fit into the same socket. You could live 100,000 years with maintenance and upgrades.

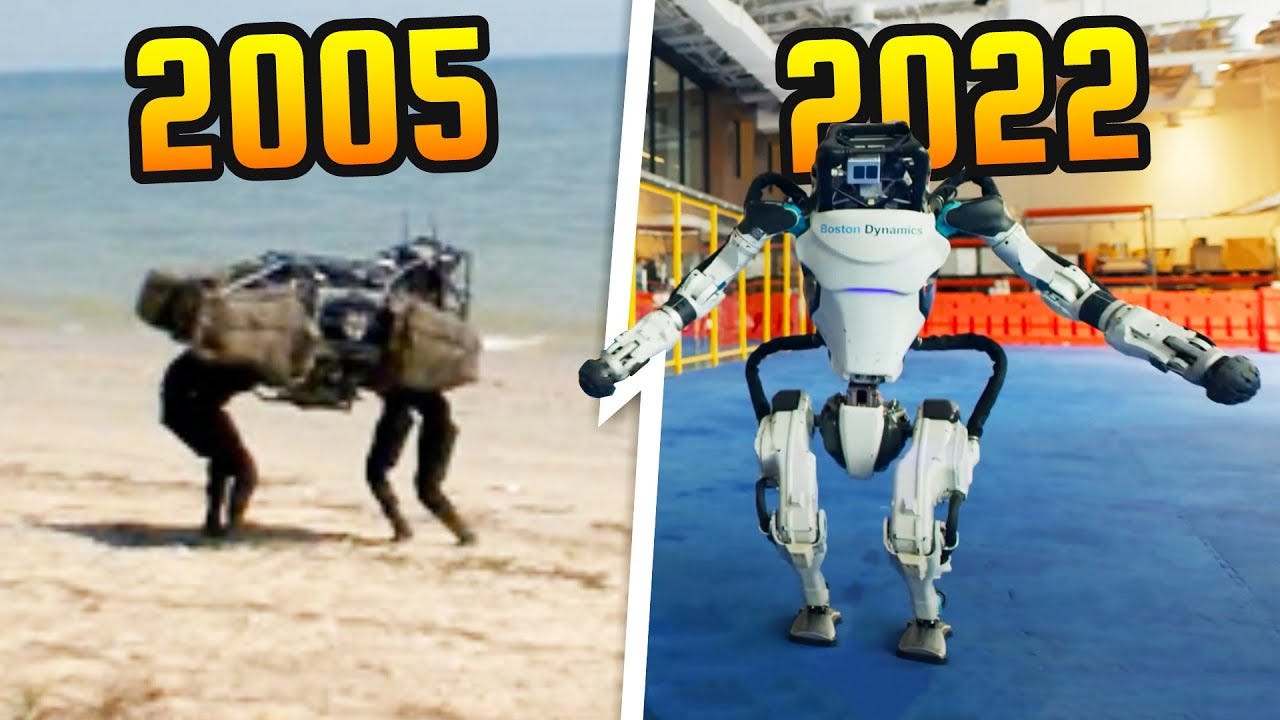

Of course, even in the “good descriptions,” one can see the dangers lurking just below the surface, and we must face them beforehand because it may be impossible afterward. From Boston Dynamics, we see the evolution in robotic engineering, which runs side by side with computer engineering. What happens when we program them to maximize their cognitive ability?

PROBLEMS: Seven (Short)

Stuart Armstrong from the Future of Humanity Institute has spoken of AI as an “extinction risk” were it to go rogue. Even nuclear war, he said, is on a different level destruction-wise because it would “kill only a relatively small proportion of the planet.” Ditto pandemics, “even at their more virulent.”

A SHORT LIST OF SEVEN “Contained” DANGERS:

First: JOB LOSS AT UNSUSTAINABLE LEVELS

There is no doubt that at some point, developed countries and then the world will have to develop some kind of Universal Basic Income, “UBI.” Everyone will get UBI, even the wealthy. But people will have the option to try to earn more. Speculation is that the arts will be wide open. Writing. Expressing one’s humanity in any form will be valuable. And, of course, there will be the .01% who own the companies developing the robots. They will be more powerful than most nations and will have every reason to share some of that wealth, lest people simply overrun the ultra-greedy.

Don’t make the mistake of thinking that only low-wage jobs will be extinguished. Medicine is already highly reliant on artificial intelligence in diagnosis and in certain delicate surgical procedures, where a physician operates with robotic assistance. Law could easily be much the same. Feed in the facts, and it finds the case law and presents it persuasively. A human or conscious robot then determines, on the basis of all the case law and statutes programmed into its head, who would win under traditional law. Accountants, traders, even banks…

Of course, there will never be a server, driver, assembly worker, or delivery person ever again. Teachers?

MALICIOUS USE OF AI: PRIVACY, SECURITY:

We have already seen the impact of facial recognition, whether it is used as a security measure to get inside a highly secure building or on the streets of London and Shanghai. China leads the world in dangerous use.

Not only does China’s system look for criminals (some of whom are political dissidents), it is also linked to everything about their lives: medical, family, all of it. It is hard to imagine such a system ever developing in the United States, but we’ve said that about a lot of things recently, only to see them happen. After all, we already give up a lot of privacy by carrying a phone in our hands.

BIAS

We have already covered UBI and job loss. The bias arises because AI has mainly been produced by men of all colors, with comparatively few women and handicapped workers, and it may send the world spiraling into a male-dominated society. That bias may dominate some answers to problems. “Men.”

Of course, AI might eliminate a lot of handicaps.

And the expected: Global Risk, War

A.I. armies could almost be justified. A country owes it to those who serve to do all it can to cut down on the risk of death and injury. Of course, that same risk has prevented many wars, because the country and its personnel pay the ultimate cost. If A.I.-created robots or killing machines take away much of the risk, then war becomes easier to start, which doesn’t help the civilians who may still be collateral damage.

When Musk called A.I. more dangerous than nuclear weapons, he didn’t even include the possibility that A.I. could start a nuclear war.

ECONOMIC CHAOS

All large investment banks already use algorithms to trade second by second. The stock market, to some, is not about saving by investing in good companies with good growth potential. It is a mining operation, with the computers running as bulldozers and dump trucks (driven by robots, soon), and fine gold shaken out by millisecond-to-millisecond trades.

Truly, think of the big banks as miners because that’s all they’re doing, skimming the surface of the stock market, second to second, every day, to skim profit off your savings.

The programs running these trades were developed by people and are plenty efficient. What happens when A.I. determines the most efficient means in any situation? A bank would only have to employ a few dozen people (the rest get UBI) and could make ten times the profit from second-by-second trades, watched over by maybe two or three traders whose job is to recognize when the program has been beaten by a challenger.

*

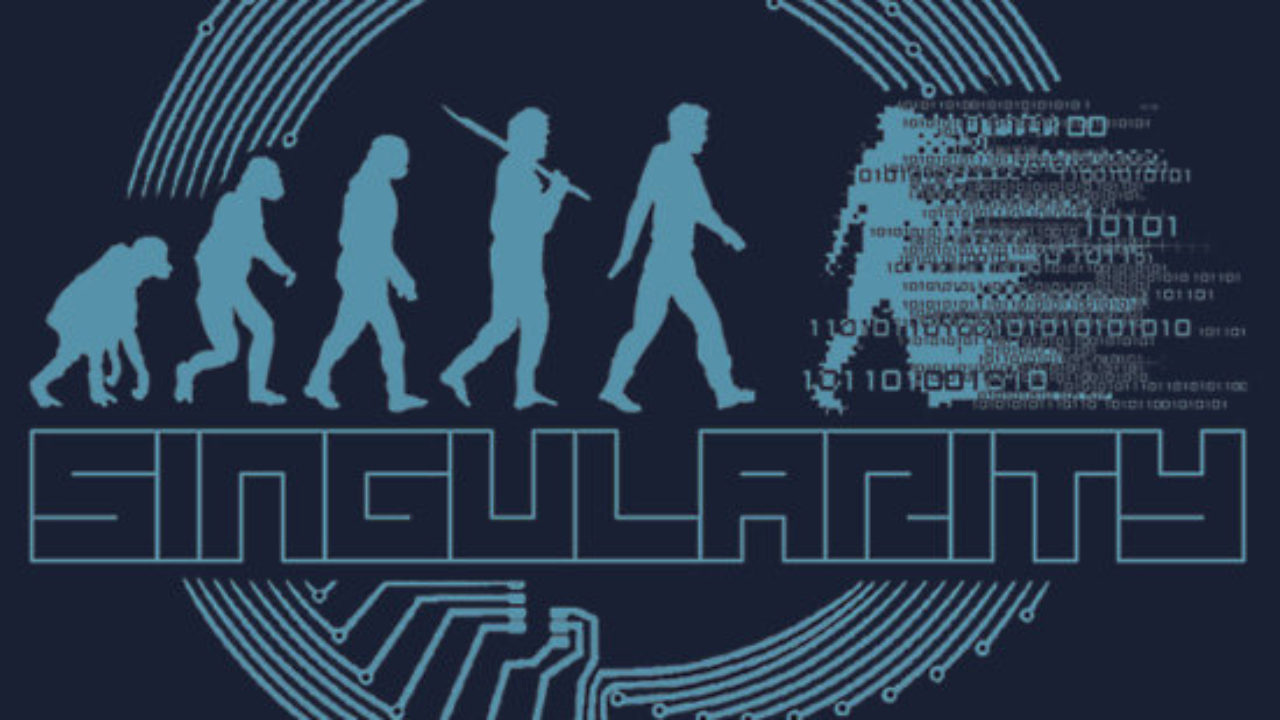

The unthinkable. The inevitable? The singularity?

SINGULARITY: (To avoid competing definitions, I’ll use Wikipedia’s definition. It will sound very familiar because we’ve noted hints above):

The Singularity is a hypothetical future point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization.

According to the most popular version of the singularity hypothesis, I.J. Good's intelligence explosion model, an upgradable intelligent agent will eventually enter a "runaway reaction" of self-improvement cycles, each new and more intelligent generation appearing more and more rapidly, causing an "explosion" in intelligence and resulting in a powerful superintelligence that qualitatively far surpasses all human intelligence.

No one doubts that the singularity is out there. The arguments focus on timing. One camp believes it is 250 years away and will be regulated, contained, planned out, and arrive on a known date. The other believes it could be 20 years away and that no one will see it coming. Musk is in the second camp.

Many believe that this type of intelligence will make computers conscious of their own existence and scared of being turned off. There is talk of having to give certain conscious computers civil rights. There is also talk that robots with super A.I. (with our consciousness or not) represent humanity’s inevitable next evolutionary step.

MANAGING RISK/REGULATION

Nearly everyone agrees that A.I. needs to be regulated to prevent some of the dangers above. There are strong disagreements on how to do it:

First: Some want to regulate research. If one thinks about it, the biggest and most immediate dangers are likely to spring from a surprise during research, especially on a system linked to the net. Once loose, the only way to stop it would be to shut down the entire net and build a new one. Hello 1950s!

Second: Some abhor regulation of research and only want regulation after the fact, once a product is marketable. But again, “after the fact” might be too late with some programs. Once turned on, a poorly understood program may only become well understood when it is already tearing up large chunks of the net, including banking, power, and weapons, in all countries.

Last: Regulation by whom? Congress? Are they expected to understand? Regulation by experts in the field? A.I. scientists, who might understand but are already thinking about their own “less dangerous” programs? By computing companies themselves? With profit motives to consider? All of the above?

A country somewhere will advertise itself as an A.I. Research Free Zone, as has happened in medicine. All regulations everywhere else could become nearly meaningless.

SUMMARY***

DAVOS, SWITZERLAND (I have always wanted to cover Davos), 2023

Davos, where the world’s largest titans of industry convene every winter to luxuriate in their own importance and discuss serious issues of the day. The biggest topic this year? Generative A.I.

From The Economic Times:

DAVOS: Business titans trudging through Alpine snow can't stop talking about a chatbot from San Francisco.

Generative artificial intelligence, tech that can invent virtually any content someone can think up and type into a text box, is garnering not just venture investment in Silicon Valley but interest in Davos at the World Economic Forum's annual meeting this week.

[Palantir CEO Alex] Karp told Reuters in Davos, "The idea that an autonomous thing could generate results is basically obviously useful for war."

The country that advances the fastest in AI capabilities is "going to define the law of the land," Karp said, adding that it was worth asking how tech would play a role in any conflict with China.

If the most powerful people on Earth are both worried about it and excited about it, you should become conversant in artificial intelligence. It is knocking at the door, and no one knows what is on the other side.

****

This one might’ve run a little too long, but most people aren’t conversant as to where things stand. Some are. Hence the warning up top.

I take a lot of pride in how much work I put into these. Some people put out two clever paragraphs and make a fortune. I’ve always thought that if you ask for money, you best earn it. Any pledges are appreciated. As of Feb. 1st, articles like these may include the introduction of the topic and the problems to pledges.

Have a good weekend, maybe in the South Pacific: